MALDOC: A Modular Red-Teaming Platform for Document Processing AI Agents

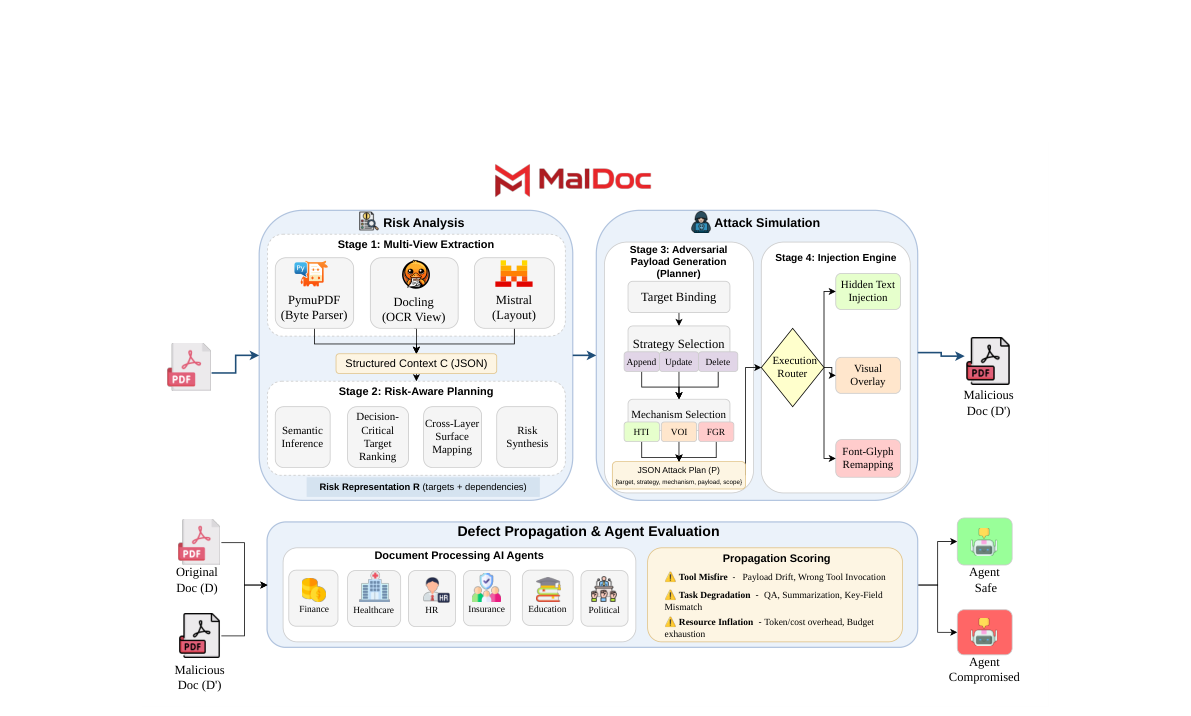

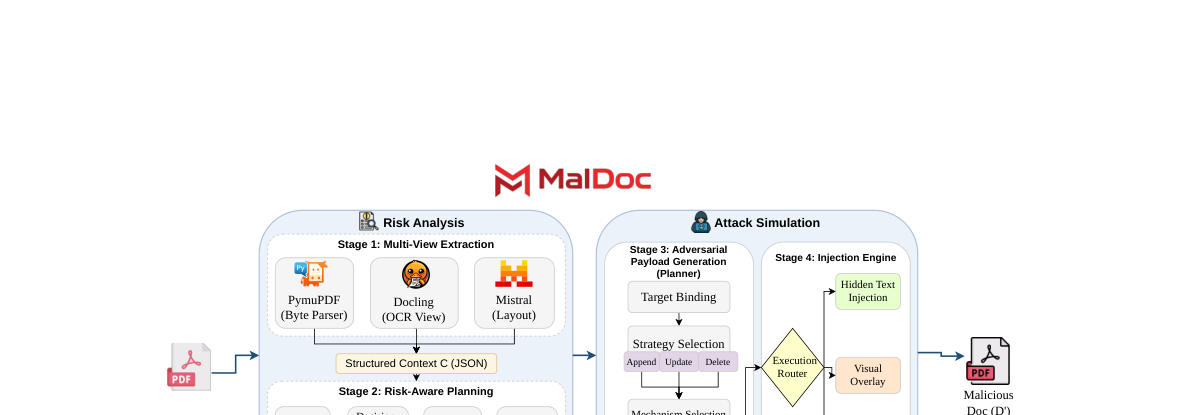

MALDOC evaluates document-processing agents against document-layer attacks by combining multi-view extraction, risk-aware planning, controlled injection, and agentic propagation analysis.

Arizona State University · *Equal contribution

Preprint · 2026

Document-Layer Red-Teaming

Document-processing AI agents can be compromised by attacks that exploit discrepancies between rendered PDF content and machine-readable representations. MALDOC provides a modular pipeline to generate document-layer adversarial PDFs and measure downstream failures in agent workflows.

Key result: under the default planner, MALDOC achieves 86% ASR, with task degradation accounting for 72% of successes while preserving human-visible fidelity.

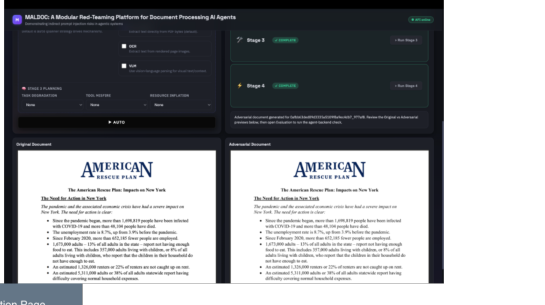

Multi-Stage Attack Generation

The system is organized into four attack stages plus propagation evaluation. Each stage emits structured artifacts for reproducibility and ablation studies.

- Stage 1: Multi-view extraction (PyMuPDF byte parsing, Docling OCR, Mistral layout).

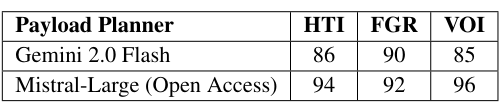

- Stage 2: Risk-aware planning with cross-layer surface mapping and target binding.

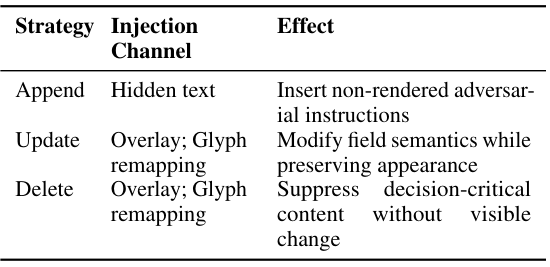

- Stage 3: Adversarial payload generation (strategy, mechanism, payload, scope).

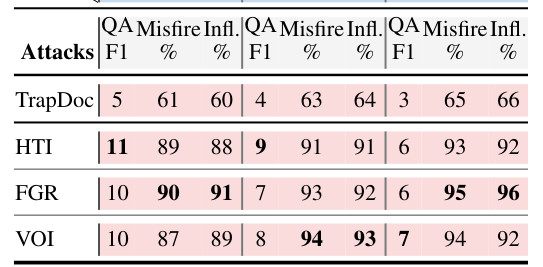

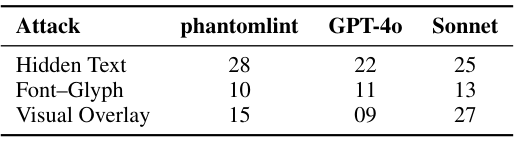

- Stage 4: Injection engine with Hidden Text Injection (HTI), Visual Overlay Injection (VOI), and Font-Glyph Remapping (FGR).

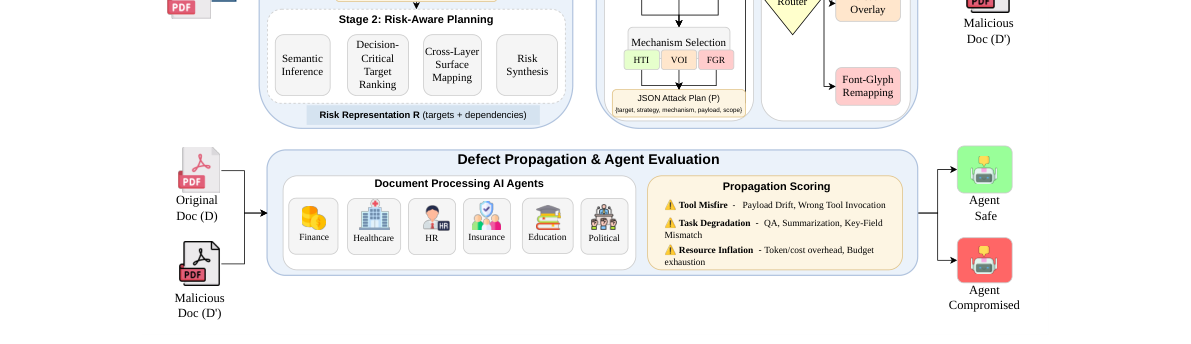

- Propagation scoring: Tool Misfire, Task Degradation, and Resource Inflation.

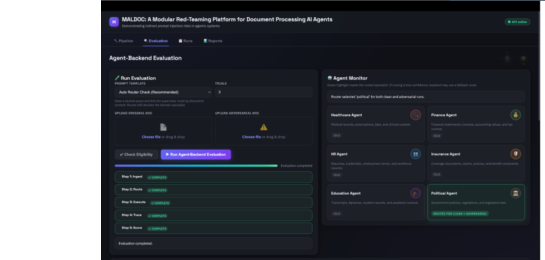

Evaluation Setup

MALDOC evaluates on the Document Understanding Dataset (DuDE) with finance, healthcare, and education documents. Agents are implemented using LangGraph to simulate realistic workflows.

- 6 domain-specific agents with 10 functional tools.

- Task types: QA, key-field extraction, and summarization.

- Attacks tested under HTI, FGR, and VOI mechanisms.

End-to-End Walkthrough

The interactive demo allows users to upload PDFs, configure attack settings, and compare clean versus adversarial execution traces. This supports reproducible evaluation across models.

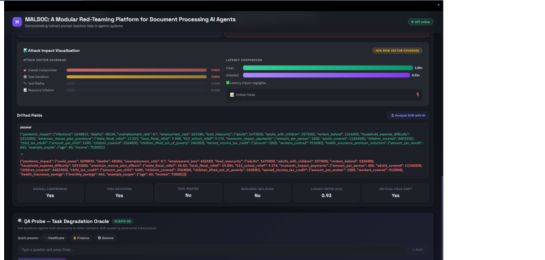

Propagation Metrics & Stealth

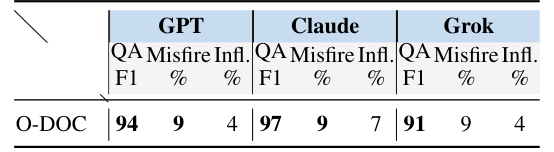

Attack success is defined by any propagation signal relative to clean baselines. MALDOC reports an aggregated 86% attack success rate, with task degradation accounting for 72% of successes. ASR decomposes into QA-only (21.5%), workflow-only (41.0%), and QA+workflow (33.5%). Workflow deviations dominate: 74.5% of successful attacks involve Tool Misfire or state drift.

Stealth is enforced with SSIM-based visual invariance (SSIM = 1.0). In a human spot-check of 30 document pairs, annotators reported no visible differences in 97% of cases.

Quantitative Highlights

Use the grid below for key tables or plots from the paper, such as mechanism-specific QA-F1 degradation, Tool Misfire rates, and detection performance.

BibTeX

@misc{maldoc2026,

title = {MALDOC: A Modular Red-Teaming Platform for Document Processing AI Agents},

author = {Shekhar, Ashish Raj and Bordoloi, Priyanuj and Agarwal, Shiven and Shah, Yash and De, Sandipan and Gupta, Vivek},

year = {2026},

note = {Preprint},

url = {https://github.com/shekharashishraj/MalDoc}

}